In this article we look at the C build process: how we get from C source files to executable code, programmed on the target. It wasn’t so long ago that this was common knowledge (the halcyon days of the hand-crafted makefile!) but modern IDEs are making this knowledge ever more arcane.

Compilation

The first stage of the build process is compilation.

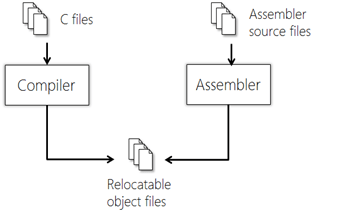

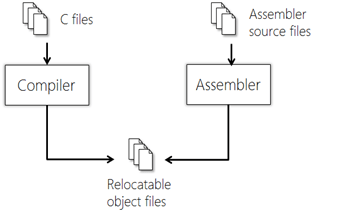

The compiler is responsible for allocating memory for definitions (static and automatic) and generating opcodes from program statements. A relocatable object file (.o) is produced. The assembler also produces .o files from assembly-language source.

The compiler works with one translation unit at a time. A translation unit is a .c file that has passed through the pre-processor.

The compiler and assembler create relocatable object files (.o)

A Librarian facility may be used to take the object files and combine them into a library file.

Compilation stages

Compilation is a multi-stage process, with each stage working on the output of the previous one. The compiler itself is normally broken down into three parts:

- The front end, responsible for parsing the source code

- The middle end, responsible for optimisation

- The back end, responsible for code generation

Front End Processing:

Pre-processing

The pre-processor parses the source code file and evaluates pre-processor directives (lines starting with a #), for example #define. A typical function of the pre-processor is to #include function / type declarations from header files. In the terminology of the C standard, the input to the pre-processor (a source file plus everything it #includes) is a preprocessing translation unit; the output from the pre-processor is a translation unit.
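As a minimal sketch of the directives described above, the fragment below uses #include, an object-like macro, a function-like macro and conditional compilation. The names BUFFER_SIZE, SQUARE, SCALE and USE_LARGE_BUFFER are made up for illustration; they do not come from any particular project.

```c
#include <stddef.h>               /* pulls in the declaration of size_t */

#define BUFFER_SIZE 16            /* object-like macro: replaced by 16 */
#define SQUARE(x) ((x) * (x))     /* function-like macro: textual substitution */

#ifdef USE_LARGE_BUFFER           /* hypothetical build option */
#define SCALE 4
#else
#define SCALE 1
#endif

static unsigned char buffer[BUFFER_SIZE * SCALE];

/* Clears the buffer and reports how many bytes the pre-processor sized it to. */
size_t clear_buffer(void)
{
    for (size_t i = 0; i < sizeof buffer; ++i) {
        buffer[i] = 0;
    }
    return sizeof buffer;
}

/* After pre-processing this reads: return ((a + b) * (a + b)); */
int square_of_sum(int a, int b)
{
    return SQUARE(a + b);
}
```

With GCC or Clang, the -E option stops after pre-processing and prints the resulting translation unit, which is a handy way to see exactly what the compiler proper receives.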

Whitespace removal

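Outside of string and character literals, C treats whitespace only as a separator between tokens, so an early stage of processing the translation unit is to discard any whitespace that is not needed to keep tokens apart.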

Tokenising

A C program is made up of tokens. A token may be

- a keyword (for example ‘while’)

- an operator (for example, ‘*’)

- an identifier (for example, a variable or function name)

- a literal (for example, 10 or “my string”)

- a comment (which is discarded at this point)
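To make the idea of tokens more concrete, here is a deliberately simplified sketch of a tokeniser. It is not how any real compiler is written: the hypothetical count_tokens function only recognises identifiers or keywords, decimal integer literals and single-character operators or punctuators, and it ignores comments and string literals entirely.

```c
#include <ctype.h>
#include <stddef.h>

/* Hypothetical helper: counts the tokens in a fragment of C-like source. */
size_t count_tokens(const char *src)
{
    size_t count = 0;

    while (*src != '\0') {
        unsigned char c = (unsigned char)*src;

        if (isspace(c)) {
            ++src;                       /* whitespace only separates tokens */
        } else if (isalpha(c) || c == '_') {
            while (isalnum((unsigned char)*src) || *src == '_') {
                ++src;
            }
            ++count;                     /* identifier or keyword */
        } else if (isdigit(c)) {
            while (isdigit((unsigned char)*src)) {
                ++src;
            }
            ++count;                     /* integer literal */
        } else {
            ++src;
            ++count;                     /* one-character operator or punctuator */
        }
    }
    return count;
}
```

In this sketch "int x;" and "int    x;" both yield three tokens, whereas "intx;" yields only two: extra whitespace is irrelevant, but the whitespace that separates two tokens is not.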

Syntax analysis

Syntax analysis ensures that tokens are organised in the correct way, according to the rules of the language. If not, the compiler will produce a syntax error at this point. The output of syntax analysis is a data structure known as a parse tree.

Intermediate Representation

The output from the compiler front end is a functionally equivalent program expressed in some machine-independent form known as an Intermediate Representation (IR). The IR program is generated from the parse tree.

IR allows the compiler vendor to support multiple different languages (for example C and C++) on multiple targets without having n * m combinations of toolchain.

There are several IRs in use, for example GIMPLE, used by GCC. IRs are typically in the form of an Abstract Syntax Tree (AST) or pseudo-code.

Middle End Processing:

Semantic analysis

Semantic analysis adds further semantic information to the IR AST and performs checks on the logical structure of the program. The type and amount of semantic analysis performed varies from compiler to compiler but most modern compilers are able to detect potential problems such as unused variables, uninitialized variables, etc. Any problems found at this stage are normally presented as warnings, rather than errors.

It is normally at this stage that the program symbol table is constructed and any debug information is inserted.

Optimisation

Optimisation transforms the code into a functionally-equivalent, but smaller or faster form. Optimisation is usually a multi-level process. Common optimisations include inline expansion of functions, dead code removal, loop unrolling, register allocation, etc.
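To illustrate what 'functionally equivalent' means here, the sketch below shows loop unrolling done by hand. An optimiser may apply a similar transformation automatically; the function names are invented for this example and the unroll factor of four is an arbitrary choice.

```c
#include <stddef.h>

/* Straightforward version: one addition and one loop test per element. */
long sum_simple(const int *data, size_t count)
{
    long total = 0;
    for (size_t i = 0; i < count; ++i) {
        total += data[i];
    }
    return total;
}

/* Unrolled by four: fewer loop tests and branches, but more code. */
long sum_unrolled(const int *data, size_t count)
{
    long total = 0;
    size_t i = 0;

    for (; i + 4 <= count; i += 4) {
        total += data[i];
        total += data[i + 1];
        total += data[i + 2];
        total += data[i + 3];
    }
    for (; i < count; ++i) {             /* handle the leftover elements */
        total += data[i];
    }
    return total;
}
```

Both functions return the same result for the same input; only the size and speed of the generated code differ, which is exactly the trade-off the optimiser is managing.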

Back End Processing:

Code generation

Code generation converts the optimised IR code structure into native opcodes for the target platform.

Memory allocation

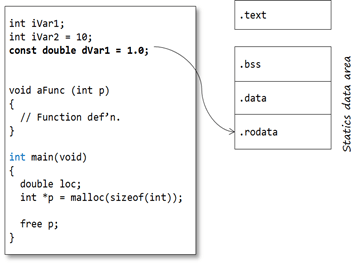

The C compiler allocates memory for code and data in Sections. Each section contains a different type of information. Sections may be identified by name and/or with attributes that identify the type of information contained within. This attribute information is used by the Linker for locating sections in memory (see later).

Code

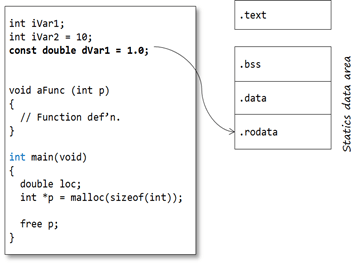

Opcodes generated by the compiler are stored in their own memory section, typically known as .code or .text

Static data

The static data region is actually subdivided into two further sections:

- one for uninitialized-definitions (int iVar1;).

- one for initialised-definitions (int iVar2 = 10;)

So it should not be a surprise if iVar1 and iVar2 are not adjacent to each other in memory.

The uninitialized-definitions’ section is commonly known as the .bss or ZI section. The initialised-definitions’ section is commonly known as the .data or RW section.
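The two kinds of static definition can be sketched as follows. The section names in the comments are the typical ones mentioned above, not a guarantee for any particular toolchain; next_ticket is a hypothetical function added to show that function-scope statics are treated the same way.

```c
int iVar1;          /* uninitialised definition: typically placed in .bss */
int iVar2 = 10;     /* initialised definition: typically placed in .data */

int next_ticket(void)
{
    /* A function-scope static is still static data, not stack data.
       It is initialised once, before the program starts running. */
    static int last_ticket = 100;
    return ++last_ticket;
}
```

The C language guarantees that iVar1 reads as zero at start-up; on an embedded target it is the startup code that makes this true.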

Constants

Constants may come in two forms:

- User-defined constant objects (for example const int c;)

- Literals (‘magic numbers’, macro definitions or strings)

The traditional C model places user-defined const objects in the .data section, along with non-const statics (so they may not be truly constant; consistent with this, C does not treat a const int as a constant expression, so it cannot be used to give the size of a file-scope array, for example).

Literals are commonly placed in the .text / .code section. Most compilers will optimise numeric literals away and use their values directly where possible.

Many modern C toolchains support a separate .const / .rodata section specifically for constant values. This section can be placed (in ROM) separate from the .data section. Strictly, this is a toolchain extension.
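The different flavours of 'constant' can be sketched like this. All of the names are invented for the example, and where each object ends up depends on the toolchain.

```c
#include <stddef.h>

const int max_items = 4;     /* const object: read-only, but NOT a constant expression in C */
enum { TABLE_SIZE = 4 };     /* enumeration constant: a true integer constant expression */
#define NAME_LENGTH 8        /* macro: replaced by the literal 8 before compilation */

/* int bad_table[max_items];    rejected at file scope: the size is not a constant expression */
int table[TABLE_SIZE];       /* accepted */
char name[NAME_LENGTH];      /* accepted */

size_t table_entries(void)
{
    return sizeof table / sizeof table[0];
}

size_t name_bytes(void)
{
    return sizeof name;
}
```

This is one reason C code commonly uses enum or #define, rather than const int, for array dimensions.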

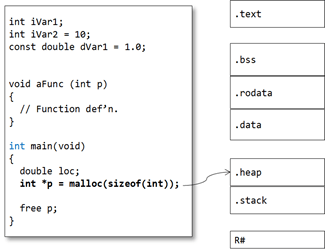

Automatic variables

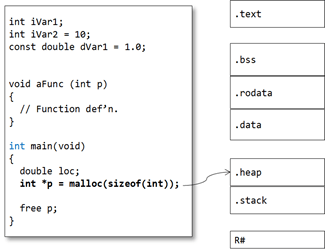

The majority of variables are defined within functions and classed as automatic variables. This also includes parameters and any temporary-returned-object (TRO) from a non-void function.

The default model in general programming is that the memory for these program objects is allocated from the stack. For parameters and TROs the memory is normally allocated by the calling function (by pushing values onto the stack), whereas for local objects, memory is allocated once the function is called. This key feature enables a function to call itself, that is, recursion (though recursion is generally a bad idea in embedded programming as it may cause stack-overflow problems). In this model, automatic memory is reclaimed by popping the stack on function exit.

It is important to note that the compiler does NOT create a .stack segment. Instead, opcodes are generated that access memory relative to some register, the Stack Pointer, which is configured at program start-up to point to the top of the stack segment (see below)

However, on most modern microcontrollers, especially 32-bit RISC architectures, automatics are stored in scratch registers, where possible, rather than on the stack. For example the Procedure Call Standard for the Arm Architecture (AAPCS) defines which CPU registers are used for passing arguments into, and returning results from, a function, and which are available for local variables.
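A small recursive function shows why automatic storage has to be allocated per call. This is only a sketch: whether result and n actually live on the stack or in registers is up to the compiler and the calling convention.

```c
unsigned long factorial(unsigned int n)
{
    unsigned long result;        /* automatic: each active call has its own instance */

    if (n <= 1) {
        result = 1;
    } else {
        /* The nested call gets fresh copies of n and result,
           so this call's values are untouched when it returns. */
        result = n * factorial(n - 1);
    }
    return result;
}
```

Each level of recursion consumes more automatic storage, which is why unbounded recursion is a stack-overflow risk on small targets.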

Dynamic data

Memory for dynamic objects is allocated from a section known as the Heap. As with the Stack, the Heap is not allocated by the compiler at compile time but by the Linker at link-time.
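For comparison with static and automatic objects, here is a minimal sketch of a dynamic object. The helper duplicate_text is hypothetical; malloc and free are the standard library routines that manage the Heap at run-time.

```c
#include <stdlib.h>
#include <string.h>

/* Hypothetical helper: returns a Heap-allocated copy of text, or NULL if
   the allocation fails. The caller owns the copy and must free() it. */
char *duplicate_text(const char *text)
{
    size_t bytes = strlen(text) + 1;     /* include the terminating '\0' */
    char *copy = malloc(bytes);

    if (copy != NULL) {
        memcpy(copy, text, bytes);
    }
    return copy;
}
```

Note that only the pointer is a static or automatic object; the storage it points to does not appear in any section of the object file.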

Object files

The compiler produces relocatable object files – .o files.

The object file contains the compiled source code – opcodes and data sections. Note that the object file only contains the sections for static variables. At this stage, section locations are not fixed.

The .o file is not (yet) executable because, although some items are set in concrete (for example: instruction opcodes, pc-relative addresses, “immediate” constants, etc.), static and global addresses are known only as offsets from the starts of their relevant sections. Also, addresses defined in other modules are not known at all, except by name. The object file contains two tables – Imports and Exports:

- Exports contains any extern identifiers defined within this translation unit (so no statics!)

- Imports contains any identifiers declared (and used) within the translation unit, but not defined within it.

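Note that the identifier names stored in these tables may be in a decorated form. C++ toolchains mangle names to encode type information; C toolchains do not, although some add a prefix such as a leading underscore.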
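The following sketch of a single translation unit shows which identifiers would typically land in each table. The names are invented for the example, and the comments describe the usual outcome rather than a guarantee.

```c
#include <string.h>              /* declares strlen; it is defined in the C library */

static int call_count;           /* internal linkage: appears in neither table */
int last_length;                 /* defined here with external linkage: exported */

int measure(const char *text)    /* defined here with external linkage: exported */
{
    ++call_count;
    /* strlen is declared and used but not defined in this translation unit,
       so it is normally imported (unless the compiler expands it inline). */
    last_length = (int)strlen(text);
    return last_length;
}

int measure_calls(void)          /* exported */
{
    return call_count;
}
```

On GNU-style toolchains, running the nm utility on the resulting .o file lists these symbols, with undefined (imported) ones marked as such.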

Linking

The Linker combines the (compiled) object files into a single executable program. In order to do that it must perform a number of tasks.

Symbol resolution

The primary function of the Linker (from which it derives its name) is to resolve references between object files, ensuring that each symbol defined by the program has a unique address.

If any references remain unresolved, all specified library/archive (.a) files are searched and the appropriate modules are gathered in order to resolve those references. This is an iterative process. If, after this, the Linker still cannot resolve a symbol it will report an ‘unresolved reference’ error.

Be careful, though: the C standard requires that every external object that is used has exactly one definition across the whole program, but it does not require the toolchain to diagnose a violation. Some toolchains therefore treat the same uninitialised symbol defined in two translation units as referring to the same object! Unlike C++, C toolchains do not always strictly enforce a ‘One Definition Rule’ on global variables; although a ‘sensible’ toolchain probably should!
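The usual way to stay out of trouble is to declare a global in a header and define it in exactly one source file. The sketch below folds both parts into one listing for brevity; counter.h and counter.c are hypothetical file names.

```c
/* What would normally live in a shared header (say, counter.h): */
extern int event_count;          /* declaration only: no storage is reserved */
void record_event(void);

/* What would live in exactly one source file (say, counter.c): */
int event_count = 0;             /* the single definition: storage is reserved here */

void record_event(void)
{
    ++event_count;
}
```

Every other translation unit that includes the header sees only the declaration, so the Linker finds one definition to resolve all the references against.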

Section concatenation

The Linker then concatenates like-named sections from the input object files.

The combined sections (output sections) are usually given the same names as their input sections. Program addresses are adjusted to take account of the concatenation.

Section location

To be executable, code and data sections must each be located at an absolute address in memory. This can be done on a section-by-section basis but more commonly sections are concatenated from some base address. Normally there is one base address in non-volatile memory for persistent sections (for example code) and one address in volatile memory for non-persistent sections (for example the Stack).

Data initialisation

On an embedded system any initialised data must be stored in non-volatile memory (Flash / ROM). On startup any non-const data must be copied to RAM. It is also very common to copy read-only sections like code to RAM to speed up execution (not shown in this example).

In order to achieve this the Linker must create extra sections to enable copying from ROM to RAM. Each section that is to be initialized by copying is divided into two, one for the ROM part (the initialisation section) and one for the RAM part (the run-time location). The initialisation section generated by the Linker is commonly called a shadow data section – .sdata in our example (although it may have other names).

If manual initialization is not used, the linker also arranges for the startup code to perform the initialization.

The .bss section is also located in RAM but does not have a shadow copy in ROM. A shadow copy is unnecessary, since the .bss section contains only zeroes. This section can be initialised algorithmically as part of the startup code.
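The work done by the startup code can be sketched on a desktop machine by using ordinary arrays as stand-ins for the memory regions. On a real target the addresses and sizes would come from symbols provided by the Linker; every name below is hypothetical.

```c
#include <stddef.h>
#include <string.h>

/* Stand-in for the initialisation (shadow) section held in ROM. */
static const unsigned char rom_init_image[4] = { 10, 20, 30, 40 };

/* Stand-ins for the run-time .data and .bss regions in RAM. */
static unsigned char ram_data[4];
static unsigned char ram_bss[8];

/* Simulates power-on RAM, whose contents cannot be relied upon. */
void scramble_ram(void)
{
    memset(ram_data, 0xA5, sizeof ram_data);
    memset(ram_bss, 0xA5, sizeof ram_bss);
}

/* What the startup code does before main() is entered. */
void init_sections(void)
{
    memcpy(ram_data, rom_init_image, sizeof ram_data);   /* copy ROM image to .data */
    memset(ram_bss, 0, sizeof ram_bss);                  /* zero-fill .bss */
}
```

The copy needs a source image in ROM, whereas the zero-fill needs only a start address and a length, which is why .bss costs no ROM space.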

Linker control

The detailed operation of the linker can be controlled by invocation (command-line) options or by a Linker Control File (LCF).

You may know this file by another name such as linker-script file, linker configuration file or even scatter-loading description file. The LCF defines the physical memory layout (Flash/SRAM) and placement of the different program regions. LCF syntax is highly toolchain-dependent, so each toolchain will have its own format; although the role performed by the LCF is largely the same in all cases.

When an IDE is used, these options can usually be specified in a relatively friendly way. The IDE then generates the necessary script and invocation options.

The most important thing to control is where the final memory sections are located. The hardware memory layout must obviously be respected – for most processors, certain things must be in specific places.

Secondly, the LCF specifies the size and location of the Stack and Heap (if dynamic memory is used). It is common practice to locate the Stack and Heap with the Heap at the lower address in RAM and the Stack at a higher address to minimise the potential for the two areas overlapping (remember, the Heap grows up the memory and the Stack grows down) and corrupting each other at run-time.

The linker configuration file shown above leads to a fairly typical memory layout shown here.

- .cstartup – the system boot code – is explicitly located at the start of Flash.

- .text and .rodata are located in Flash, since they need to be persistent

- .stack and .heap are located in RAM.

- .bss is located in RAM in this case but is (probably) empty at this point. It will be initialised to zero at start-up.

- The .data section is located in RAM (for run-time) but its initialisation section, .sdata, is in ROM.

The Linker will perform checks to ensure that your code and data sections will fit into the designated regions of memory.

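The output from the locating process is a load file in a platform-independent format, commonly ELF (although there are many others). DWARF, which is often mentioned alongside ELF, is the format of the debug information carried inside the file rather than a load file format in its own right.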

The ELF file is also used by the debugger when performing source-code debugging.

Loading

ELF is a target-independent output file format. In order to be loaded onto the target, the ELF file must usually be converted into a native flash / PROM format (typically, .bin or .hex).
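As a small illustration of what such a conversion involves, and assuming .hex here means the widely used Intel HEX format, each record in the file ends with a checksum: the two's complement of the sum of all the other bytes in the record. The function name below is invented for this sketch.

```c
#include <stddef.h>
#include <stdint.h>

/* Computes the checksum byte of one Intel HEX record.
   record holds the byte count, the two address bytes, the record type
   and the data bytes, in that order (not the leading ':' or the checksum). */
uint8_t ihex_checksum(const uint8_t *record, size_t length)
{
    uint8_t sum = 0;

    for (size_t i = 0; i < length; ++i) {
        sum = (uint8_t)(sum + record[i]);    /* sum modulo 256 */
    }
    return (uint8_t)(0u - sum);              /* two's complement */
}
```

For the end-of-file record, whose four bytes are 00 00 00 01, this gives FF, matching the familiar final line :00000001FF of an Intel HEX file. A raw .bin image, by contrast, carries no addresses or checksums at all.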

Key points

- The compiler generates opcodes and allocates data from a source code file to produce an object file.

- The compiler works on a single translation unit at a time.

- The linker concatenates object files and library files to create a program

- The linker is responsible for allocating the Stack and Heap sections

- The linker operation is controlled by a configuration file, unique to the target system.

- Linked files must be translated to a target-dependent format for loading onto the target.